Living: Main Street District

Working: Dallas, TX

Laundry: See above

This Week in Laundry I introduce my first lightpuppet – a successful failure.

For the past ten weeks I’ve been working hard on a prototype. I’ve been working hard, without guarantee of success.

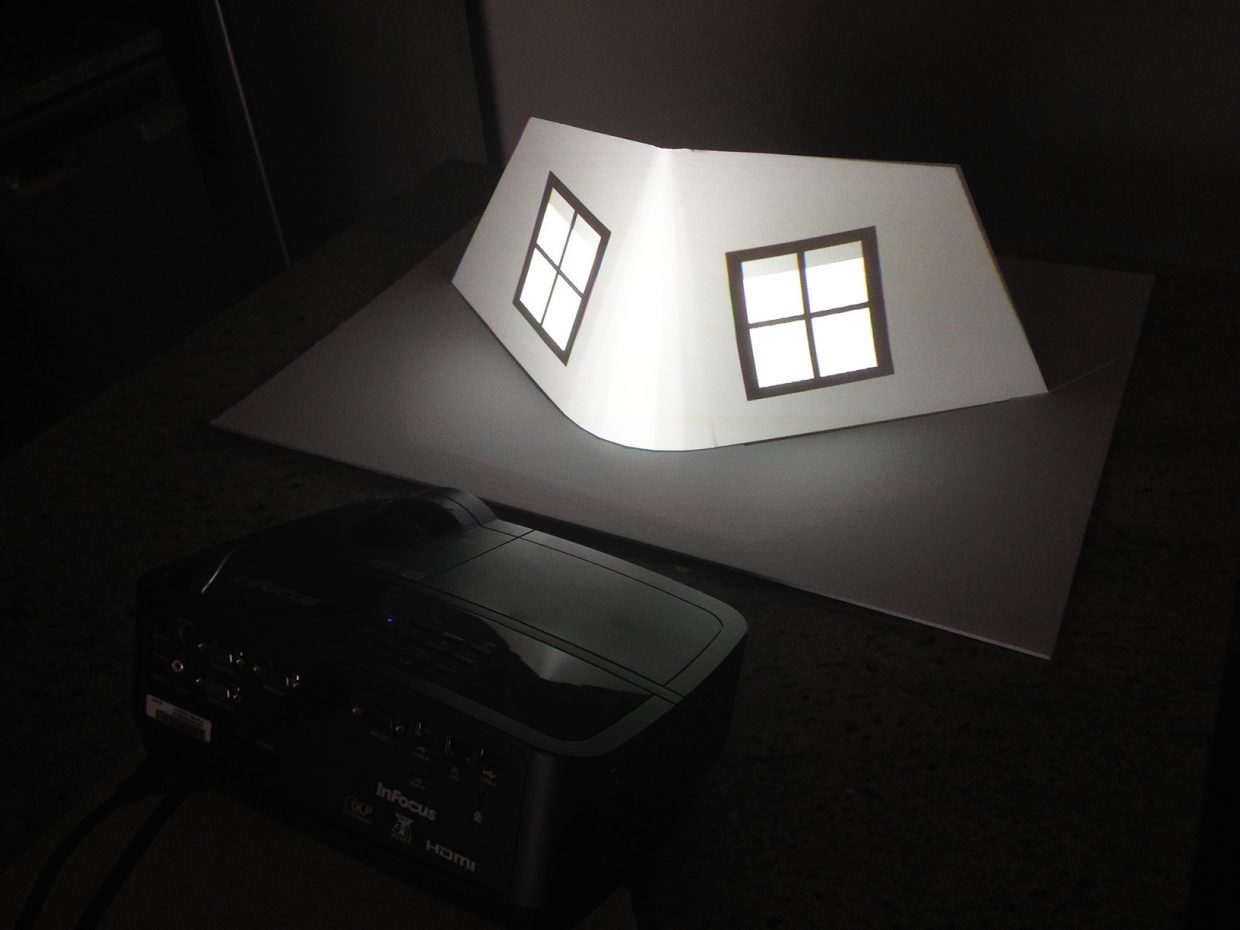

I call the prototype a lightpuppet. It is what it sounds like – a puppet made of light. The opposite of a shadow puppet.

The core technology behind the prototype uses the light from a projector and a fundamental concept called projection mapping, also known as spatial augmented reality.

Projection mapping, as an art, is nothing new. Disney has been using projection mapping on castles, Main Street, Pandora mountains, and giant trees for years, and on faces too, such as those on the Seven Dwarfs Mine Train. Obscura Digital likes to projection map onto things like the Empire State Building. The first architectural projection I ever saw was on a cathedral in Amiens, France, where light painted the statue work with the coloring from the cathedral’s original construction.

A projection map in the Maya exhibit at the Perot Museum of Nature and Science in downtown Dallas. The projection paints the stone in a pre-recorded animation.

In almost all of these cases, the projectors cast pre-rendered and recorded media upon these buildings. Often in lockstep with sound and even fireworks.

Projection mapping in these cases rarely involves interactive content generated in real time.

Not that it doesn’t exist.

Technologies like render caves use real-time projection mapping to create a 360-degree viewing environment for digital content. These systems projection map game engines through multiple warped and blended projectors onto screens all around you.

The Elumenati produced a Unity3D plugin for warped domes.

Andrew MacQuarrie created a Unity3D single-projector projection mapping plugin as part of a Google Summer of Code project.

Microsoft Research produced RoomAlive, which turns a common room into an interactive projection map experience.

And many museum experiences use multiple projectors with interactive content for the likes of maps, globes, cultural expressions, and others. The Museum at the September 11th memorial in New York hosts several fantastic examples of the latter.

But in most of these cases, we have yet to witness a viable release of the interactive variety at the same scale and imagination that its prerecorded brethren enjoy. We have not yet gotten to paint Cinderella’s Castle in our favorite colors. Or cast spells within the Wizarding World of Harry Potter, with persistent lighting effects that last longer than just a few seconds.

Interactive projection mapping can do that.

My goal is to create a toolset that enables this technique to find its way into the park. The technology is there – but the proper development path remains unexplored.

You have to start somewhere. And for me, that start is a prototype. A teaser for what’s to come. Something I can show to the creative and technology firms that serve the theme park world. A starting point for my software development.

That start is a thing I call a lightpuppet. A pair of windowpanes that come to life.

My first lightpuppet – the windowpanes come to life and are controlled through a webgui on your mobile device

The genesis of this prototype, in many ways, captures the breadth and grandeur of my cross country travel.

Because it was in a bookstore in Boulder, Colorado where this all started. In a room that I felt like I could not leave, until I found the book I was waiting to read. Or it found me. That book was Jim Henson’s biography.

And because it was somewhere in an Airbnb in Chicago, while I was reading a chapter in Jim Henson’s biography, that I stumbled across a paragraph about Jim’s first Muppets. About how he slightly crossed the eyes to give them human expression.

That was when I remembered my film training. I remembered how much emotional information comes through the expression of the eyes. And it was somewhere in that moment, between Muppets, my past in film, and staring at a windowpane in an Airbnb in Chicago, that I first thought of this lightpuppet. I thought of a pair of windows that come to life, through a cartoon expression of the eye. And I knew projection mapping was the way to bring this vision to life.

I started to teach myself Unity3D. And it was somewhere in Cincinnati that I first figured out how to warp custom meshes. Somewhere later in Chicago that I built the web-GUI puppet controller for my mobile phone. And somewhere in Pittsburgh that I first tried to calibrate a projector – only to realize my technique wasn’t good enough.

At that moment in Pittsburgh I realized I was a long way from a working prototype. But I also felt I was onto something.

So I made the commitment. To work full time on the software necessary, starting at the beginning of the year.

Some months later, at the IAAPA trade show in Orlando, I saw the Mystique projection mapping tools from Christie. I saw pose estimation through structured light and computer vision for the first time. Walking away from the show, I realized I would need to add automatic calibration to my toolset.

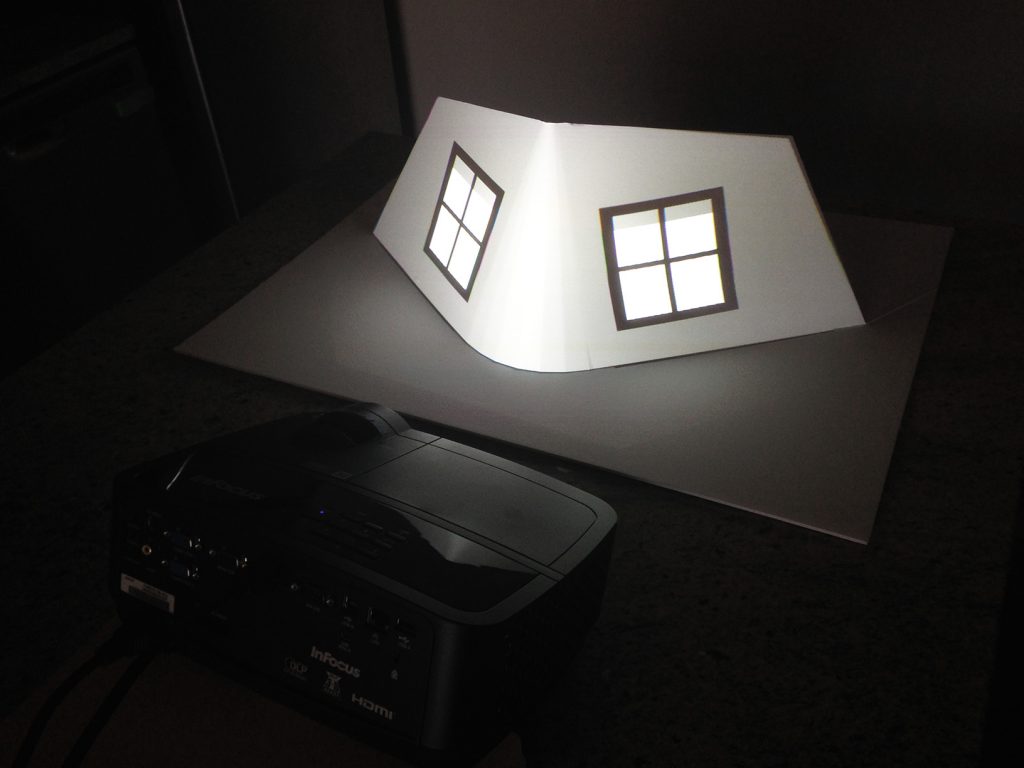

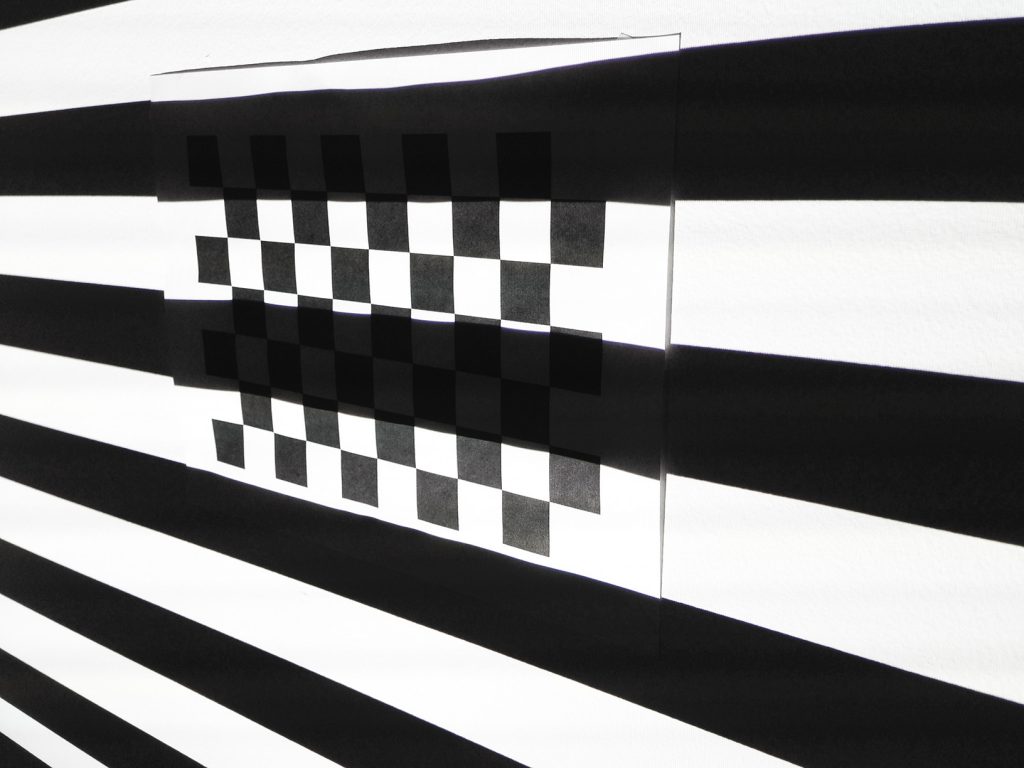

Structured Light. The projector’s pixel locations are found by detecting lines drawn on a checkerboard pattern. Green lines show the algorithm’s detected line locations.

Structured Light on a checkerboard pattern. A sequence of images with bands of different widths is used to detect projector pixel locations.

Projector and Raspberry Pi camera rig for structured light testing and development. Taken from a post two weeks ago.
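The "bands of different widths" idea can be sketched in a few lines. This is a minimal illustration and not the code behind the prototype: it assumes the bands follow a Gray code, which is one common choice for structured light, and the function names (`band_patterns`, `decode_column`) are made up for this example. Each projected image contributes one bit per projector column, and reading the bits seen at one spot across the whole sequence recovers which column lit it.

```python
from typing import List


def gray_encode(n: int) -> int:
    """Convert a column index to its Gray code (neighbouring columns differ by one bit)."""
    return n ^ (n >> 1)


def gray_decode(g: int) -> int:
    """Invert gray_encode by XOR-ing together all right shifts of g."""
    n = 0
    while g:
        n ^= g
        g >>= 1
    return n


def band_patterns(width: int) -> List[List[int]]:
    """Return one row per projected image, widest bands first.

    Each row holds a 0 (dark) or 1 (lit) value for every projector column.
    """
    bits = max(1, (width - 1).bit_length())
    return [[(gray_encode(x) >> b) & 1 for x in range(width)]
            for b in reversed(range(bits))]


def decode_column(observed_bits: List[int]) -> int:
    """Recover the projector column from the dark/lit sequence seen at one camera pixel."""
    g = 0
    for bit in observed_bits:
        g = (g << 1) | bit
    return gray_decode(g)


if __name__ == "__main__":
    patterns = band_patterns(8)  # a hypothetical 8-column projector
    for row in patterns:
        print("".join("#" if v else "." for v in row))
    # What a camera pixel looking at projector column 5 would see across the sequence
    seen = [row[5] for row in patterns]
    print(decode_column(seen))
```

For the 8-column toy case the three images come out as `....####`, `..####..`, and `.##..##.`, and the pixel looking at column 5 decodes back to 5. A real setup also has to threshold the camera image into dark and lit, which is left out here.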

And then January came. I settled down, nomad style, in Texas, and got to work. I built my interfaces to the OpenCV libraries from Unity3D. I built automatic camera tools for a Raspberry Pi. I built structured light rendering in Unity3D. And I built checkerboard calibration tools in Unity as well.

I spent weeks working on line detection. On checker corner location finding. When I got to the end of the implementation, what I found was a solution that somewhat, but not perfectly, worked. I couldn’t perfectly find the corners. It was not a success.

Detected checkerboard corners from the projector’s perspective. If you look closely, the positions are close…but still slightly off by a pixel or two.

But I was optimistic. I hoped that, perhaps, the imperfection was consistent enough to still yield a usable calibration.

And the calibration values I saw were fairly reasonable.

So I moved on to pose estimation. The process of moving my lightpuppet in the digital world onto the surface in the physical world.

And it worked. Kind of.

But not well enough.

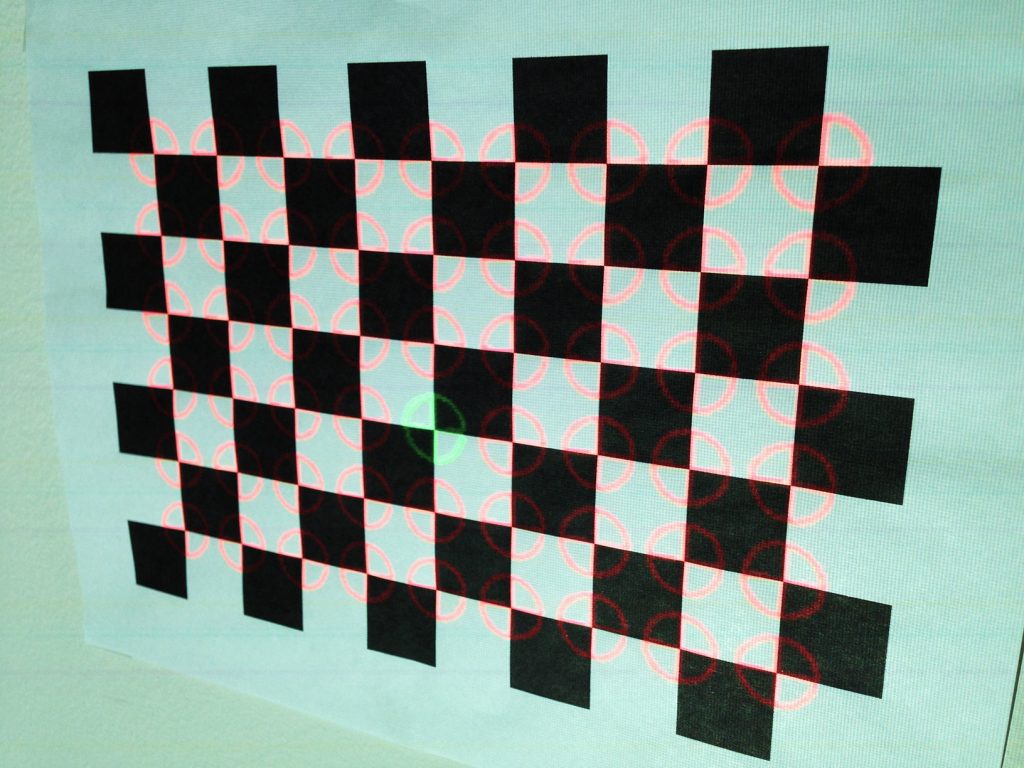

The corners are still off in many places. And the window panes are over-pixelated. And because of the misalignment, the corners of the window panes improperly skew at the outer edges.

Imperfect projection mapping. When aligning the orientation points, the projected model overshoots the physical foamboard model, casting light on the wall behind. The edges of the windowpanes are slightly curved towards the nose.
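As a rough illustration of why a small calibration error tends to show most at the outer edges, here is a minimal pinhole-projection sketch. It is not the code behind the prototype, and it does not claim to explain this particular misalignment. The focal lengths, image center, and 3D points are made-up values, and lens distortion is ignored.

```python
def project(point, focal_px, center):
    """Pinhole projection of a 3D point (x, y, z), with z > 0, to a pixel (u, v)."""
    x, y, z = point
    cx, cy = center
    return (focal_px * x / z + cx, focal_px * y / z + cy)


def pixel_error(point, true_focal, est_focal, center):
    """Distance in pixels between projecting with the true and the estimated focal length."""
    u0, v0 = project(point, true_focal, center)
    u1, v1 = project(point, est_focal, center)
    return ((u1 - u0) ** 2 + (v1 - v0) ** 2) ** 0.5


if __name__ == "__main__":
    center = (960.0, 540.0)                   # hypothetical 1920x1080 projector
    true_focal, est_focal = 1500.0, 1515.0    # hypothetical: estimate is 1% too large
    near_axis = (0.05, 0.0, 2.0)              # 5 cm off axis, 2 m away
    near_edge = (0.60, 0.0, 2.0)              # 60 cm off axis, 2 m away
    print(pixel_error(near_axis, true_focal, est_focal, center))
    print(pixel_error(near_edge, true_focal, est_focal, center))
```

With these numbers the point near the axis lands well under a pixel off, while the point near the edge lands about 4.5 pixels off. In this simple model the same 1% error grows in proportion to distance from the image center.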

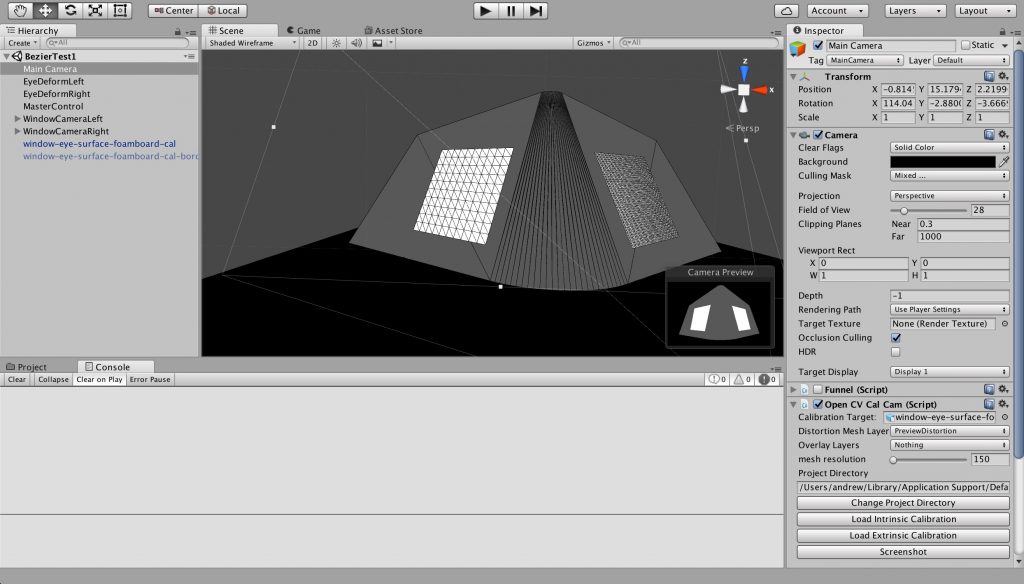

The model and windowpane rig in a Unity3D scene – with projector calibration and orientation applied to the Unity3D output camera

This was supposed to be the prototype I took into the design firms. Into the tech firms. To inspire them to use my technology. To try and find a first project, or a first client.

But I just don’t think it’s good enough. I don’t think that it inspires magic the way it should. The imperfections get in the way.

I learned my lesson from the last time I shopped around prototypes. Showing early work can inspire a lack of confidence. It can work against you rather than for you.

So here I am, ten weeks in, without a working solution. Or at least something worth showing. It is, in many senses, a failure. I failed to meet my goal.

Of course, when I took on the project, I understood the risk. A failure was very much a possibility. So I’m not surprised about where I’ve arrived. Though I am a little disappointed.

That being said, in many ways I’ve found success in the project as well. I now understand how projection mapping works, and some of the problems that come with it, in a way I never did before. I understand how structured light works. And I definitely understand Unity3D and C# in a way I never did before. I expect these tools will be useful down the road.

For all these reasons, and likely more, my failure is also my success. That is why I like to call this first lightpuppet a successful failure.

As for where to go from here with my lightpuppet, it’s hard to say. On the one hand, I can double down and attempt to address the problems in calibration. In theory, if I can precisely calibrate, I can precisely orient my lightpuppet as well. But there’s still a risk there.

On the other hand, there are other tech art projects I’d like to tackle. Perhaps they should occupy my time for a little while.

But no matter what comes next, in all of this uncertainty, at least one thing is true. Reliable. And certain. At least there will always be laundry to carry me through and through.